New user training

No edit summary |

No edit summary |

||

| (99 intermediate revisions by 8 users not shown) | |||

| Line 1: | Line 1: | ||

{{obsolete|training/new_user_training/}} | |||

This page mirrors and expands upon the content provided in the | {|align=right | ||

|__TOC__ | |||

|} | |||

This page mirrors and expands upon the content provided in the '''HiPerGator Account Training in Coursera (No longer available)'''. | |||

Please see https://docs.rc.ufl.edu/training/new_user_training/ for the new version of the training. | |||

# Recognize the role of Research Computing, utilize HiPerGator as a research tool and select appropriate resource allocations for analyses | |||

# | |||

# Describe appropriate use of the login servers and how to request resources for work beyond those limits | |||

Effective January 11th, 2021, this training module is required for all new account holders to obtain an account. New accounts will not be created until Coursera successfully completes the training. | |||

==Training Objectives== | |||

# Recognize the role of Research Computing, utilize HiPerGator as a research tool, and select appropriate resource allocations for analyses | |||

# Login to HiPerGator using an ssh client | |||

# Describe the appropriate use of the login servers and how to request resources for work beyond those limits | |||

# Describe HiPerGator's three main storage systems and the appropriate use for each | # Describe HiPerGator's three main storage systems and the appropriate use for each | ||

# Use the module system for loading application environments | # Use the module system for loading application environments | ||

| Line 13: | Line 22: | ||

---- | |||

==Module 1: Introduction to Research Computing and HiPerGator== | |||

===HiPerGator=== | |||

* | * About 70,000 cores [[File:Hpg3-rc-header.png|300px|right|text-top|alt="A row of HiPerGator 3 compute node racks"]] | ||

* Hundreds of GPUs | * Hundreds of GPUs | ||

* 10 Petabytes of storage | * 10 Petabytes of storage | ||

* | * The HiPerGator AI cluster has [[File:Hpgai.slider.png|300px|right|text-top|alt="A photo of a row of HiPerGator AI servers"]] | ||

** 1,120 NVIDIA A100 GPUs | ** 1,120 NVIDIA A100 GPUs | ||

** 17,000 AMD Rome Epyc Cores | ** 17,000 AMD Rome Epyc Cores | ||

For additional information visit our website: https://www.rc.ufl.edu/ | For additional information, visit our website: https://www.rc.ufl.edu/ | ||

The [https://www.rc.ufl.edu/about/cluster-history/ Cluster History page] has updated information on the current and historical hardware at Research Computing. | |||

{{Note|'''Summary:''' HiPerGator is a large, high-performance compute cluster capable of tackling some of the largest computational challenges, but users need to understand how to responsibly and efficiently use the resources.|note}} | |||

===Investor Supported=== | |||

HiPerGator is heavily subsidized by the university, but we do require faculty researchers to make investments for access. Research Computing sell three main products: | HiPerGator is heavily subsidized by the university, but we do require faculty researchers to make investments for access. Research Computing sell three main products: | ||

# Compute: NCUs (Normalized Compute Units) | # Compute: NCUs (Normalized Compute Units)[[File:Cpu_storage_gpu.png|300px|right|alt="A composite image showing a CPU, a DDN storage cabinet and an NVIDIA A100 front plate"]] | ||

#* 1 CPU core and | #* 1 CPU core and 7.8 GB of RAM (updated Jan 2021) | ||

# Storage: | # Storage: | ||

#* Blue: High-performance | #* Blue (<code>/blue</code>): High-performance storage for most data during analyses | ||

#* Orange: Intended for archival use | #* Orange (<code>/orange</code>): Intended for archival use | ||

# GPUs | # GPUs | ||

#* Sold in units of GPU cards | #* Sold in units of GPU cards | ||

| Line 39: | Line 51: | ||

Investments can either be '''hardware''' investments, lasting for 5-years or '''service''' investments lasting 3-months or longer. | Investments can either be '''hardware''' investments, lasting for 5-years or '''service''' investments lasting 3-months or longer. | ||

* Price sheets are located here: https://www.rc.ufl.edu/ | * Price sheets are located here: https://www.rc.ufl.edu/get-started/price-list/ | ||

* Submit a purchase request | * Submit a purchase request for: | ||

** [https://gravity.rc.ufl.edu/access/purchase-request/hpg-hardware/ Hardware (5-years)] | |||

** [https://gravity.rc.ufl.edu/access/purchase-request/hpg-service/ Services (3-months to longer)] | |||

* For a full explanation of services offered by Research Computing, see: https://www.rc.ufl.edu/services/ | |||

====Cluster Components | ==Module 2: How to Access and Run Jobs== | ||

===Cluster Components=== | |||

# [[Development and Testing|Login servers]] | # [[Development and Testing|Login servers]] | ||

# [[Sample SLURM Scripts|SLURM Scheduler]] | # [[Sample SLURM Scripts|SLURM Scheduler]] | ||

# [https://www.rc.ufl.edu/about/cluster-history/ Compute Cluster] | # [https://www.rc.ufl.edu/about/cluster-history/ Compute Cluster] | ||

===Accessing HiPerGator=== | |||

* [[Training#Connecting_to_HiPerGator|ssh to host hpg.rc.ufl.edu]] | * [[Training Videos#Connecting_to_HiPerGator|ssh to host hpg.rc.ufl.edu]] | ||

** [[File:Play_icon.png|frameless|30px|link=https://mediasite.video.ufl.edu/Mediasite/Play/89b780ef71094f568a6c091953445ff31d]][https://mediasite.video.ufl.edu/Mediasite/Play/89b780ef71094f568a6c091953445ff31d Watch a video on connecting from MacOS.] | |||

** [[File:Play_icon.png|frameless|30px|link=https://mediasite.video.ufl.edu/Mediasite/Play/227c2cae1147422c91afff28435a51ac1d]][https://mediasite.video.ufl.edu/Mediasite/Play/227c2cae1147422c91afff28435a51ac1d Watch a video on connecting from Windows.] | |||

* [https://jhub.rc.ufl.edu/ jhub.rc.ufl.edu] (requires UF Network) | * [https://jhub.rc.ufl.edu/ jhub.rc.ufl.edu] (requires UF Network) | ||

** See also [[ | ** See also [[File:Play_icon.png|frameless|30px|link=https://mediasite.video.ufl.edu/Mediasite/Play/8efcf534ef3c408e9238d8deeeda083a1d]][[https://mediasite.video.ufl.edu/Mediasite/Play/8efcf534ef3c408e9238d8deeeda083a1d|overview video]]. | ||

* [https://galaxy.rc.ufl.edu/ galaxy.rc.ufl.edu] | * [https://galaxy.rc.ufl.edu/ galaxy.rc.ufl.edu] | ||

* Open on Demand: [https://ood.rc.ufl.edu/ ood.rc.ufl.edu] (requires UF Network) | * Open on Demand: [https://ood.rc.ufl.edu/ ood.rc.ufl.edu] (requires UF Network) | ||

** See also [[ | ** See also [[File:Play_icon.png|frameless|30px|link=https://mediasite.video.ufl.edu/Mediasite/Play/4654bfa838624de894085bf54678848f1d|]][[https://mediasite.video.ufl.edu/Mediasite/Play/4654bfa838624de894085bf54678848f1d|overview video]]. | ||

===Proper use of Login Nodes=== | |||

* Generally speaking, interactive work other than managing jobs and data is ''discouraged'' on the login nodes. | |||

* Login nodes are intended for file and job management, and ''short-duration'' testing and development. | |||

[[File:Login node use limits.png|thumb|right|"Acceptable login server use limits"]] | |||

See [[Development_and_Testing|more information here]]. | See [[Development_and_Testing|more information here]]. | ||

{{Note| | |||

Login server acceptable use limits: | |||

* No more than '''16-cores''' | |||

* No longer than '''10 minutes (wall time)''' | |||

* No more than '''64 GB of RAM'''|warn}} | |||

===Resources for Scheduling a Job=== | |||

For use beyond what is acceptable on the login servers, you can request resources on development servers, GPUs servers, through JupyterHub, Galaxy, Graphical User Interface servers via open on demand or submit batch jobs. All of these services work with the scheduler to allocate your requested resources so that your computations run efficiently and do not impact other users. | For use beyond what is acceptable on the login servers, you can request resources on development servers, GPUs servers, through JupyterHub, Galaxy, Graphical User Interface servers via open on demand or submit batch jobs. All of these services work with the scheduler to allocate your requested resources so that your computations run efficiently and do not impact other users. | ||

| Line 77: | Line 100: | ||

* [https://jhub.rc.ufl.edu/ Jupyter Hub] | * [https://jhub.rc.ufl.edu/ Jupyter Hub] | ||

===Scheduling a Job=== | |||

# Understand the resources that your analysis will use: | # Understand the resources that your analysis will use: | ||

#* '''CPUs''': Can your job use multiple CPU cores? Does it scale? | #* '''CPUs''': Can your job use multiple CPU cores? Does it scale? | ||

| Line 85: | Line 108: | ||

# Request those resources: | # Request those resources: | ||

#* [[Sample SLURM Scripts|See sample job scripts]] | #* [[Sample SLURM Scripts|See sample job scripts]] | ||

#* Watch the HiPerGator: SLURM Submission Scripts training video. This video is approximately | #* Watch the HiPerGator: SLURM Submission Scripts training video. This video is approximately 35 minutes and includes a demonstration [[File:Play_icon.png|frameless|30px|link=https://mediasite.video.ufl.edu/Mediasite/Play/3fe6e15428ad401e97afa093252e0e151d]] | ||

#* Watch the HiPerGator: SLURM Submission Scripts for MPI Jobs training video. This video is approximately | #* Watch the HiPerGator: SLURM Submission Scripts for MPI Jobs training video. This video is approximately 25 minutes and includes a demonstration [[File:Play_icon.png|frameless|30px|link=https://mediasite.video.ufl.edu/Mediasite/Play/9d09e1dda67a4993b1877b33e40b30b51d]] | ||

#* Open on Demand, JupyterHub and Galaxy all have other mechanisms to request resources as SLURM needs this information to schedule your job. | #* Open on Demand, JupyterHub and Galaxy all have other mechanisms to request resources as SLURM needs this information to schedule your job. | ||

# Submit the Job | |||

#*Either using <code>sbatch</code> on the command line or through on of the interfaces | |||

#*Once your job is submitted, SLURM will check that there are resources available in your group and schedule the job to run | |||

#Run | |||

#*SLURM will work through the queue and run your job | |||

==Module 3: HiPerGator Storage and the Module System for Applications== | |||

===Locations for Storage=== | |||

The storage systems are [[Storage|reviewed on this page]]. [[File:BlueStorageRacks.JPG|250px|right|text-top|alt="Photo of the Blue storage racks"]] | |||

'''Note:''' In the examples below, the text in angled brackets (e.g. <code><user></code>) indicates example text for user-specific information (e.g. <code>/home/albertgator</code>). | |||

# Home Storage: <code>/home/<user></code> | |||

#*Each user has 40GB of space | |||

#* Good for scripts, code and compiled applications | |||

#* '''Do not''' use for job input/output | |||

#*[[Snapshots|Snapshots]] are available for seven days | |||

# Blue Storage: <code>/blue/<group></code> | |||

#* Our highest-performance filesystem | |||

#* All input/output from jobs should go here | |||

# Orange Storage: <code>/orange/<group></code> | |||

#*Slower than /blue | |||

#*'''Not''' intended for large I/O for jobs | |||

#*Primarily for archival purposes | |||

# Red Storage: <code>/red/<group></code> | |||

#*All flash parallel filesystem primarily for HiPerGator AI | |||

#*Space allocated based on need | |||

#*Scratch filesystem with data regularly deleted | |||

===Backup and Quotas=== | |||

[[File:No backup.png|noboarder|right|150px]] | |||

{{Note|Unless purchased separately, '''nothing''' is backed up on HiPerGator!!|error}} | |||

* [https://www.rc.ufl.edu/documentation/procedures/storage/data-protection/ This page describes the tape backup options and costs]. | |||

* Orange and Blue storage quotas are at the group level and based on investment. | |||

** See [https://www.rc.ufl.edu/services/rates/ price sheets] | |||

** [https://www.rc.ufl.edu/services/purchase-request/ Submit a purchase request] | |||

** The <code>blue_quota</code> and <code>orange_quota</code> commands will show your group's current quota and use. | |||

** The <code>home_quota</code> command will show you home directory quota and use. | |||

===Directories=== | |||

[[File:Automount.gif|275px|frame|This gif shows how an <code>ls</code> in <code>/blue</code> does not show the group directory for <code>ufhpc</code>. After a <code>cd</code> into the directory, and back out, it does show. This shows how the directory is automounted on access. Of course, it would be easier to just go directly without stopping in <code>/blue</code> first.|right|text-top|alt="A gif showing how a directory is automounted upon access."]] | |||

{{Note| | |||

* Directories on the Orange and Blue filesystems are automounted--they are only added when accessed. | |||

* Your group's directory may not appear until you access it. | |||

* Do not remove files necessary for your account to function normally, such as the ~/.ssh and /home directories.|warn}} | |||

* '''Remember''' | |||

** Directories may not show up in an <code>ls</code> of <code>/blue</code> or <code>/orange</code> | |||

***If you <code>cd</code> to <code>/blue</code> and type <code>ls</code>, '''you will likely not see your group directory'''. You also cannot tab-complete the path to your group's directory. However, if you add the name of the group directory (e.g. <code>cd /blue/<group>/</code> the directory becomes available and tab-completion functions. | |||

***Of course, there is no need to change directories one step at a time...<code>cd /blue/<group></code>, will get there in one command. | |||

** You may need to type the path in SFTP clients or Globus for your group directory to appear | |||

** You cannot always use tab completion in the shell | |||

===Environment Modules System=== | |||

* HiPerGator uses the [[Modules Basic Usage|lmod]] environment modules system to hide application installation complexity and make it easy to use the installed applications. | |||

* For applications, compilers or interpreters, load the corresponding module | |||

* More on the [[Modules|module system]] | |||

* [[Modules Basic Usage|Basic module use]] | |||

* [[Applications|Installed Applications]] | |||

==Module 4: Common Mistakes and Getting Support== | |||

===Common Mistakes=== | |||

# Running resource intensive applications on the login nodes is against HPG usage policy. Learn more at [[HPC Computation]]. To avoid involuntary policy violation, we recommend to run computational processes using either of the following methods: | |||

#*Submit a batch job: see [[Sample SLURM Scripts|Sample Slurm Scripts]] | |||

#*Request a development session for [[Development and Testing|testing and development]] | |||

# Writing to <code>/home</code> during batch job execution | |||

#*Use <code>/blue/<group></code> for job input/output. See [[Storage]] and [[Conda]]. | |||

#Wasting resources | |||

#*Understand CPU and memory needs of your application | |||

#*Over-requesting resources generally does not make your application run faster--it prevents other users from accessing resources. | |||

#Blindly copying lab mate scripts | |||

#*Make sure you understand what borrowed scripts do | |||

#*Many users copy previous lab mate scripts, but do not understand the details | |||

#*This often leads to wasted resources | |||

#Misunderstanding the group investment limits and the burst QOS | |||

#*Each group has specific limits | |||

#*Burst jobs are run as idle resources are available | |||

#**The [[Account_and_QOS_limits_under_SLURM#Choosing_QOS_for_a_Job|<code>slurmInfo</code>]] command can show your group's investment and current use as well as HPG cluster utilization. | |||

#When using our Python environment modules, attempting to install new Python packages may not work because incompatible packages may get installed into the ~/.local folder and result in errors at run time. If you need to install packages, create a personal or project-specific [[Conda]] environment or request the addition of new packages in existing environment modules via the [https://support.rc.ufl.edu RC Support System]. | |||

===How to get Help=== | |||

See the [[FAQ]] page for these and more hints, answers, and potential pitfalls that you may want to avoid. | |||

See the [[Get Help]] page for more details. In general, | |||

* Submit support requests via the [https://support.rc.ufl.edu/ UFRC Support System] | |||

* For problems with running jobs, provide: | |||

**JobID number(s) | |||

**Filesystem path(s) to job scripts and job logs | |||

**As much detailed information as you can about your problem | |||

*For requests to install an application, provide: | |||

** Name of application | |||

** URL to download the application | |||

==Additional information for students using the cluster for courses== | |||

The instructor will provide a list of students enrolled in the course | |||

Course group names will be in the format <code>pre1234</code> with the 3-letter department prefix and 4-digits course code. | |||

* All sections of a course will typically use the same group name, so the name may be different from the specific course you are enrolled in. | |||

* In the documentation below, substitute the generic <code>pre1234</code> with your particular group name. | |||

Students who do not have a HiPerGator Account will have one created for them | |||

Students who already have a HiPerGator Account will be added to the course group (as a secondary group) | |||

* You can create a folder in the class's <code>/blue/pre1234</code> folder with your GatorLink username | |||

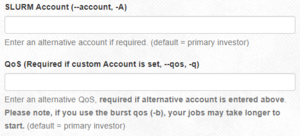

* To use the resources of the class rather than your primary group, use the <code>--account</code> and <code>--qos</code> flags, in the submit script, in the <code>sbatch</code> command or in the boxes in the Open on Demand interface. | |||

*# In your submit script, add these lines:<code><br>#SBATCH --account=pre1234<br>#SBATCH --qos=pre1234</code> | |||

*# In the <code>sbatch</code> command: <code>sbatch --account=pre1234 --qos=pre1234 my_script.sh</code> | |||

*# Using Open on Demand:[[File:OOD.account qos.screenshot.png|thumb|center|How to set account and qos options to use resources from a secondary group]] | |||

*#{{Note|JupyterHub can only use your primary group's resources and cannot be used for accessing secondary group resources. To use Jupyter using your secondary group, please use [[Jupyter|Open on Demand]].|warn}} | |||

Using your account implies agreeing to the [https://www.rc.ufl.edu/about/procedures/ Acceptable use policy]. | |||

Students understand that no restricted data should be used on HiPerGator | |||

Classes are typically allocate 32-cores, 112GB RAM and 2TB of storage | |||

* Instructors should keep this in mind when designing exercises and assignments | |||

* Students should understand that these are shared resources: use them efficiently, share them fairly and know that if everyone waits until the last minute, there may not be enough resources to run all jobs. | |||

All storage should be used for research and coursework only. | |||

Accounts created for the class and the contents of the class <code>/blue/pre1234</code> folder will be deleted at the end of the semester. Please copy anything you want to keep off of the cluster before the end of the semester. | |||

Students should consult with their professor or TA rather than opening a support request. | |||

* Only the professor or TA should open support requests if needed. | |||

Latest revision as of 01:39, 22 January 2025

This page is obsolete. It is being retained for archival purposes. The current version of this page can be found at https://docs.rc.ufl.edu/training/new_user_training/

This page mirrors and expands upon the content provided in the HiPerGator Account Training in Coursera (No longer available).

Please see https://docs.rc.ufl.edu/training/new_user_training/ for the new version of the training.

Effective January 11th, 2021, this training module is required for all new account holders to obtain an account. New accounts will not be created until the prospective user successfully completes the training.

Training Objectives

- Recognize the role of Research Computing, utilize HiPerGator as a research tool, and select appropriate resource allocations for analyses

- Log in to HiPerGator using an ssh client

- Describe the appropriate use of the login servers and how to request resources for work beyond those limits

- Describe HiPerGator's three main storage systems and the appropriate use for each

- Use the module system for loading application environments

- Locate where to receive user support

- Identify common user mistakes and how to avoid them.

Module 1: Introduction to Research Computing and HiPerGator

HiPerGator

- About 70,000 cores

- Hundreds of GPUs

- 10 Petabytes of storage

- The HiPerGator AI cluster has:

  - 1,120 NVIDIA A100 GPUs

  - 17,000 AMD Rome Epyc Cores

For additional information, visit our website: https://www.rc.ufl.edu/

The Cluster History page has updated information on the current and historical hardware at Research Computing.

Investor Supported

HiPerGator is heavily subsidized by the university, but we do require faculty researchers to make investments for access. Research Computing sells three main products:

- Compute: NCUs (Normalized Compute Units)

  - 1 CPU core and 7.8 GB of RAM (updated Jan 2021)

- Storage:

  - Blue (/blue): High-performance storage for most data during analyses

  - Orange (/orange): Intended for archival use

- GPUs

  - Sold in units of GPU cards

  - NCU investment also required to make use of GPU

Investments can be either hardware investments, lasting 5 years, or service investments, lasting 3 months or longer.

- Price sheets are located here: https://www.rc.ufl.edu/get-started/price-list/

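- Submit a purchase request for:

  - Hardware (5 years): https://gravity.rc.ufl.edu/access/purchase-request/hpg-hardware/

  - Services (3 months or longer): https://gravity.rc.ufl.edu/access/purchase-request/hpg-service/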

- For a full explanation of services offered by Research Computing, see: https://www.rc.ufl.edu/services/

Module 2: How to Access and Run Jobs

Cluster Components

- Login servers

- SLURM Scheduler

- Compute Cluster

Accessing HiPerGator

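- ssh to host hpg.rc.ufl.edu

  - Watch a video on connecting from MacOS: https://mediasite.video.ufl.edu/Mediasite/Play/89b780ef71094f568a6c091953445ff31d

  - Watch a video on connecting from Windows: https://mediasite.video.ufl.edu/Mediasite/Play/227c2cae1147422c91afff28435a51ac1d

- jhub.rc.ufl.edu (requires UF Network)

  - See also the overview video: https://mediasite.video.ufl.edu/Mediasite/Play/8efcf534ef3c408e9238d8deeeda083a1d

- galaxy.rc.ufl.edu

- Open on Demand: ood.rc.ufl.edu (requires UF Network)

  - See also the overview video: https://mediasite.video.ufl.edu/Mediasite/Play/4654bfa838624de894085bf54678848f1d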

Proper use of Login Nodes

- Generally speaking, interactive work other than managing jobs and data is discouraged on the login nodes.

- Login nodes are intended for file and job management, and short-duration testing and development.

Login server acceptable use limits:

- No more than 16 cores

- No longer than 10 minutes (wall time)

- No more than 64 GB of RAM
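As a rough illustration of the limits above, the following sketch checks a planned task against the three numbers quoted in this section. The helper name `within_login_limits` is hypothetical and not a HiPerGator command. Staying within the limits does not make the login nodes the right place for regular analysis work; they remain intended for file and job management and short tests.

```shell
#!/bin/bash
# Limits quoted from the training text above.
MAX_CORES=16
MAX_MINUTES=10
MAX_MEM_GB=64

# within_login_limits CORES MINUTES MEM_GB
# Hypothetical helper: returns 0 if the planned work fits within all
# three login-server limits, and 1 otherwise.
within_login_limits() {
  local cores="$1" minutes="$2" mem_gb="$3"
  [ "$cores" -le "$MAX_CORES" ] &&
    [ "$minutes" -le "$MAX_MINUTES" ] &&
    [ "$mem_gb" -le "$MAX_MEM_GB" ]
}

# Example: a 4-core, 5-minute, 8 GB test.
if within_login_limits 4 5 8; then
  echo "OK for a short test on a login node"
else
  echo "Request scheduler resources instead"
fi
```

Anything that fails the check belongs on a development server or in a batch job.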

Resources for Scheduling a Job

For use beyond what is acceptable on the login servers, you can request resources on development servers or GPU servers, work through JupyterHub, Galaxy, or the Graphical User Interface servers via Open on Demand, or submit batch jobs. All of these services work with the scheduler to allocate your requested resources so that your computations run efficiently and do not impact other users.

- Development Servers

- GPU Servers

- Galaxy

- SLURM Scheduler Sample Scripts

- GUI servers, including Open on Demand

- Jupyter Hub

Scheduling a Job

- Understand the resources that your analysis will use:

  - CPUs: Can your job use multiple CPU cores? Does it scale?

  - Memory: How much RAM will it use? Requesting more will not make your job run faster!

  - GPUs: Does your application use GPUs?

  - Time: How long will it run?

- Request those resources:

  - See sample job scripts

  - Watch the HiPerGator: SLURM Submission Scripts training video. This video is approximately 35 minutes and includes a demonstration: https://mediasite.video.ufl.edu/Mediasite/Play/3fe6e15428ad401e97afa093252e0e151d

  - Watch the HiPerGator: SLURM Submission Scripts for MPI Jobs training video. This video is approximately 25 minutes and includes a demonstration: https://mediasite.video.ufl.edu/Mediasite/Play/9d09e1dda67a4993b1877b33e40b30b51d

  - Open on Demand, JupyterHub and Galaxy all have other mechanisms to request resources, as SLURM needs this information to schedule your job.

- Submit the job

  - Either using sbatch on the command line or through one of the interfaces

  - Once your job is submitted, SLURM will check that there are resources available in your group and schedule the job to run

- Run

  - SLURM will work through the queue and run your job
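The steps above can be sketched as a small submit script plus a guarded submission. This is a minimal example and not an official template: the job name, resource values, file names, and the `submit_job` helper are all placeholders chosen for illustration. The `#SBATCH` options themselves are standard SLURM options.

```shell
#!/bin/bash
# Write a minimal submit script. Every value below is a placeholder to adapt.
cat > example_job.sh <<'EOF'
#!/bin/bash
#SBATCH --job-name=example        # name shown in the queue
#SBATCH --ntasks=1                # a single task
#SBATCH --cpus-per-task=4         # CPUs: request only what the program can use
#SBATCH --mem=8G                  # Memory: total RAM for the job
#SBATCH --time=01:00:00           # Time: wall-time limit (HH:MM:SS)
#SBATCH --output=example_%j.log   # %j expands to the job ID

echo "Job running on $(hostname)"
EOF

# submit_job SCRIPT
# Hypothetical wrapper: submits SCRIPT only where sbatch is available,
# so the sketch can also be read and run off the cluster.
submit_job() {
  if command -v sbatch >/dev/null 2>&1; then
    sbatch "$1"
  else
    echo "sbatch not found; run this on HiPerGator" >&2
    return 1
  fi
}
```

On the cluster, `submit_job example_job.sh` would hand the script to the scheduler, which then queues and runs it as described in the last two steps.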

Module 3: HiPerGator Storage and the Module System for Applications

Locations for Storage

The storage systems are reviewed on this page.

Note: In the examples below, the text in angled brackets (e.g. <user>) indicates example text for user-specific information (e.g. /home/albertgator).

- Home Storage: /home/<user>

  - Each user has 40 GB of space

  - Good for scripts, code and compiled applications

  - Do not use for job input/output

  - Snapshots are available for seven days

- Blue Storage: /blue/<group>

  - Our highest-performance filesystem

  - All input/output from jobs should go here

- Orange Storage: /orange/<group>

  - Slower than /blue

  - Not intended for large I/O for jobs

  - Primarily for archival purposes

- Red Storage: /red/<group>

  - All-flash parallel filesystem primarily for HiPerGator AI

  - Space allocated based on need

  - Scratch filesystem with data regularly deleted
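To make the storage guidance above concrete, here is a small sketch that builds a working directory path on /blue and flags paths that should not receive job input/output. Both helper names are hypothetical, the group `mygroup` is a placeholder, and the per-user subdirectory under the group directory is an assumption made for illustration.

```shell
#!/bin/bash
# job_workdir GROUP USER
# Prints a per-user working directory under the group's /blue space.
# The <group>/<user> layout is an assumption, not a documented rule.
job_workdir() {
  printf '/blue/%s/%s\n' "$1" "$2"
}

# ok_for_job_io PATH
# Returns 0 only for paths under /blue, which the list above names as
# the place for job input/output; everything else returns 1.
ok_for_job_io() {
  case "$1" in
    /blue/*) return 0 ;;
    *)       return 1 ;;
  esac
}

workdir=$(job_workdir mygroup albertgator)
for path in "$workdir/results" /home/albertgator/results /orange/mygroup/results; do
  if ok_for_job_io "$path"; then
    echo "use:   $path"
  else
    echo "avoid: $path"
  fi
done
```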

Backup and Quotas

Unless purchased separately, nothing is backed up on HiPerGator!

- The tape backup options and costs are described here: https://www.rc.ufl.edu/documentation/procedures/storage/data-protection/

- Orange and Blue storage quotas are at the group level and based on investment.

  - See price sheets

  - Submit a purchase request

  - The blue_quota and orange_quota commands will show your group's current quota and use.

  - The home_quota command will show your home directory quota and use.

Directories

Figure: An ls in /blue does not show the group directory for ufhpc. After a cd into the directory, and back out, it does show. This shows how the directory is automounted on access. Of course, it would be easier to just go directly without stopping in /blue first.

- Directories on the Orange and Blue filesystems are automounted: they are only added when accessed.

- Your group's directory may not appear until you access it.

- Do not remove files necessary for your account to function normally, such as the ~/.ssh and /home directories.

- Remember

  - Directories may not show up in an ls of /blue or /orange

    - If you cd to /blue and type ls, you will likely not see your group directory. You also cannot tab-complete the path to your group's directory. However, if you add the name of the group directory (e.g. cd /blue/<group>/), the directory becomes available and tab-completion functions.

    - Of course, there is no need to change directories one step at a time: cd /blue/<group> will get there in one command.

  - You may need to type the path in SFTP clients or Globus for your group directory to appear

  - You cannot always use tab completion in the shell
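The automount behaviour described above suggests a simple habit: refer to the group directory by its full path instead of relying on a listing of /blue. The sketch below wraps that in a hypothetical helper, `touch_group_dir`. The current directory stands in for a real group directory so the example runs anywhere.

```shell
#!/bin/bash
# touch_group_dir DIR
# Hypothetical helper: accesses DIR by its full path (the kind of access
# that makes an automounted directory appear) and reports the result.
touch_group_dir() {
  local dir="$1"
  if ls -d "$dir/" >/dev/null 2>&1; then
    echo "available: $dir"
  else
    echo "not available: $dir" >&2
    return 1
  fi
}

# On the cluster you would pass your own group directory, for example:
#   touch_group_dir /blue/<group>
# Here the current directory is used so the sketch runs on any system.
touch_group_dir "$PWD"
```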

Environment Modules System

- HiPerGator uses the lmod environment modules system to hide application installation complexity and make it easy to use the installed applications.

- For applications, compilers or interpreters, load the corresponding module

- More on the module system

- Basic module use

- Installed Applications
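A typical interaction with the module system might look like the sketch below. The wrapper `load_app` is hypothetical and exists only so the example fails gracefully off the cluster. The module name used in the usage note is an assumption, so check the Installed Applications list for real names. `module load` and `module list` are standard Lmod commands.

```shell
#!/bin/bash
# load_app MODULE...
# Hypothetical wrapper: loads the named module(s) and then lists what is
# currently loaded. Returns 1 if the module command is not available.
load_app() {
  if ! type module >/dev/null 2>&1; then
    echo "module command not found; are you on the cluster?" >&2
    return 1
  fi
  module load "$@" && module list
}
```

On HiPerGator, `load_app python` would be equivalent to running `module load python` followed by `module list`. Lmod's `module spider <name>` can be used beforehand to search for available versions.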

Module 4: Common Mistakes and Getting Support

Common Mistakes

- Running resource-intensive applications on the login nodes is against HPG usage policy. Learn more at HPC Computation. To avoid accidental policy violations, we recommend running computational work using one of the following methods:

  - Submit a batch job: see Sample Slurm Scripts

  - Request a development session for testing and development

- Writing to /home during batch job execution

  - Use /blue/<group> for job input/output. See Storage and Conda.

- Wasting resources

  - Understand CPU and memory needs of your application

  - Over-requesting resources generally does not make your application run faster; it only prevents other users from accessing resources.

- Blindly copying lab mate scripts

  - Make sure you understand what borrowed scripts do

  - Many users copy previous lab mates' scripts, but do not understand the details

  - This often leads to wasted resources

- Misunderstanding the group investment limits and the burst QOS

  - Each group has specific limits

  - Burst jobs are run as idle resources are available

    - The slurmInfo command can show your group's investment and current use as well as HPG cluster utilization.

- Installing Python packages on top of our Python environment modules. This may not work because incompatible packages may get installed into the ~/.local folder and result in errors at run time. If you need to install packages, create a personal or project-specific Conda environment or request the addition of new packages in existing environment modules via the RC Support System.
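For the last item, a project-specific Conda environment could be created along the following lines. This is a sketch under assumptions: the helper `make_project_env` is hypothetical, the environment location under /blue is an illustrative choice, and how conda is made available on the cluster (for example through a module) is not covered here. The `conda create` options `--yes` and `--prefix` are standard.

```shell
#!/bin/bash
# make_project_env ENV_DIR [PACKAGE...]
# Hypothetical helper: creates a Conda environment at ENV_DIR containing
# the listed packages. Returns 1 if conda is not on the PATH.
make_project_env() {
  local env_dir="$1"
  shift
  if ! command -v conda >/dev/null 2>&1; then
    echo "conda not found; make it available first" >&2
    return 1
  fi
  conda create --yes --prefix "$env_dir" "$@"
}
```

A call such as `make_project_env /blue/<group>/<user>/envs/myproject python numpy` keeps the environment separate from both the shared Python modules and ~/.local. It can then be activated with `conda activate` followed by the same path.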

How to get Help

See the FAQ page for these and more hints, answers, and potential pitfalls that you may want to avoid. See the Get Help page for more details. In general,

- Submit support requests via the UFRC Support System

- For problems with running jobs, provide:

  - JobID number(s)

  - Filesystem path(s) to job scripts and job logs

  - As much detailed information as you can about your problem

- For requests to install an application, provide:

  - Name of application

  - URL to download the application
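When reporting a job problem, it can help to gather the requested details before opening the request. The sketch below only formats text. `support_summary` is a hypothetical helper, and the job ID and paths are made-up placeholders.

```shell
#!/bin/bash
# support_summary JOBID SCRIPT_PATH LOG_PATH DESCRIPTION
# Hypothetical helper: prints the details a job-related support request
# should include, one item per line.
support_summary() {
  printf 'JobID: %s\n' "$1"
  printf 'Job script: %s\n' "$2"
  printf 'Job log: %s\n' "$3"
  printf 'Problem: %s\n' "$4"
}

# Example with placeholder values.
support_summary 12345678 \
  "/blue/mygroup/albertgator/run.sh" \
  "/blue/mygroup/albertgator/run_12345678.log" \
  "Job stops after about two minutes with an out-of-memory message."
```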

Additional information for students using the cluster for courses

The instructor will provide a list of students enrolled in the course.

Course group names will be in the format pre1234, with the 3-letter department prefix and 4-digit course code.

- All sections of a course will typically use the same group name, so the name may be different from the specific course you are enrolled in.

- In the documentation below, substitute the generic pre1234 with your particular group name.

Students who do not have a HiPerGator account will have one created for them.

Students who already have a HiPerGator account will be added to the course group (as a secondary group).

- You can create a folder in the class's /blue/pre1234 folder with your GatorLink username.

- To use the resources of the class rather than your primary group, use the --account and --qos flags in the submit script, in the sbatch command, or in the boxes in the Open on Demand interface.

  - In your submit script, add these lines:

    #SBATCH --account=pre1234
    #SBATCH --qos=pre1234

  - In the sbatch command: sbatch --account=pre1234 --qos=pre1234 my_script.sh

  - Using Open on Demand: set the account and qos options to use resources from a secondary group.

  - JupyterHub can only use your primary group's resources and cannot be used for accessing secondary group resources. To use Jupyter with your secondary group, please use Open on Demand.
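Because the account and QOS flags share the course group name, they can be generated from a single value. The sketch below does that and rejects names that do not look like the pre1234 pattern. `course_flags` is a hypothetical helper, and the check assumes lowercase letters, which the text does not state explicitly.

```shell
#!/bin/bash
# course_flags GROUP
# Hypothetical helper: prints the sbatch flags for a course group.
# Assumes group names are three lowercase letters plus four digits.
course_flags() {
  local group="$1"
  if [[ ! "$group" =~ ^[a-z]{3}[0-9]{4}$ ]]; then
    echo "unexpected course group name: $group" >&2
    return 1
  fi
  printf -- '--account=%s --qos=%s\n' "$group" "$group"
}

course_flags pre1234
```

On the cluster this could be combined with a submission, for example `sbatch $(course_flags pre1234) my_script.sh`.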

Using your account implies agreeing to the Acceptable Use Policy.

Students understand that no restricted data should be used on HiPerGator.

Classes are typically allocated 32 cores, 112 GB of RAM and 2 TB of storage.

- Instructors should keep this in mind when designing exercises and assignments

- Students should understand that these are shared resources: use them efficiently, share them fairly and know that if everyone waits until the last minute, there may not be enough resources to run all jobs.

All storage should be used for research and coursework only.

Accounts created for the class and the contents of the class /blue/pre1234 folder will be deleted at the end of the semester. Please copy anything you want to keep off of the cluster before the end of the semester.

Students should consult with their professor or TA rather than opening a support request.

- Only the professor or TA should open support requests if needed.