
Revision as of 23:11, 27 February 2015

Parallel Computing

Parallel computing refers to running multiple computational tasks simultaneously. It rests on the assumption that a large computational task can be divided into smaller tasks that run concurrently.

Types of parallel computing

Parallel computing applies only to the last row of the table below:

| | Single Instruction | Multiple Instructions | Single Program | Multiple Programs |
|---|---|---|---|---|
| Single Data | SISD | MISD | | |
| Multiple Data | SIMD | MIMD | SPMD | MPMD |

In more detail:

- Data parallel (SIMD): The same operations/instructions are carried out on different data items simultaneously.

- Task parallel (MIMD): Different instructions are carried out on different data concurrently.

- SPMD: Single program, multiple data; not synchronized at the level of individual operations.

SPMD and MIMD are essentially the same, because any MIMD program can be rewritten as an SPMD program. SIMD is also equivalent, but in a less practical sense. MPI (Message Passing Interface) is primarily used for SPMD/MIMD.
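The SPMD idea can be sketched in a few lines of Python. This is only an illustration of the structure, not of real speedup: every worker runs the same function and uses its rank to pick the data it owns. The names `spmd_program` and `run_spmd`, the cyclic data distribution, and the use of threads in place of real processes are all assumptions made for this example.

```python
from concurrent.futures import ThreadPoolExecutor


def spmd_program(rank, size, data):
    """The single program: every worker runs this, on its own slice of data."""
    # Cyclic distribution (an assumption): rank r owns items r, r+size, r+2*size, ...
    local = data[rank::size]
    # Each worker proceeds independently; no per-operation synchronization.
    return sum(x * x for x in local)


def run_spmd(data, size=4):
    """Launch `size` copies of the same program and combine their results."""
    with ThreadPoolExecutor(max_workers=size) as pool:
        partials = list(pool.map(lambda r: spmd_program(r, size, data), range(size)))
    # Summing the partial results plays the role of a reduction step.
    return sum(partials)


if __name__ == "__main__":
    print(run_spmd(list(range(10))))
```

Changing `size` changes how the data is split but not the final result, which is the property an SPMD decomposition is expected to preserve.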

Shared memory is memory that all processors can access. From a hardware point of view, all processors have direct access to a common physical memory, typically over a bus. The processors can work independently while accessing the same memory. A change that one processor makes to a variable in memory is visible to all the others, because every processor can directly address the same logical memory locations regardless of where the physical memory actually resides.

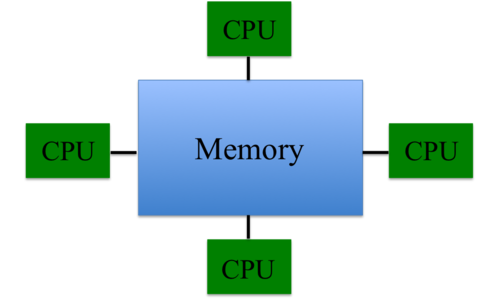
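As a rough software analogy for the shared-memory model, the sketch below lets several Python threads update one object that all of them can address directly. The function name `shared_memory_demo` and its parameters are hypothetical, and the lock is an addition needed to keep concurrent updates consistent; it is not something the description above prescribes.

```python
import threading


def shared_memory_demo(num_workers=4, increments=1000):
    """Several threads update one counter that lives in memory they all share."""
    state = {"counter": 0}   # a single object, directly visible to every thread
    lock = threading.Lock()  # serializes updates so that none are lost

    def worker():
        for _ in range(increments):
            with lock:
                state["counter"] += 1

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # Every update made by any thread is visible here without copying data.
    return state["counter"]


if __name__ == "__main__":
    print(shared_memory_demo())
```

No data is sent between the workers: they communicate simply by reading and writing the same location.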

Uniform Memory Access (UMA):

- Most commonly represented today by Symmetric Multiprocessor (SMP) machines

- Identical processors

- Equal access and access times to memory

- Sometimes called CC-UMA - Cache Coherent UMA. Cache coherent means if one processor updates a location in shared memory, all the other processors know about the update. Cache coherency is accomplished at the hardware level.

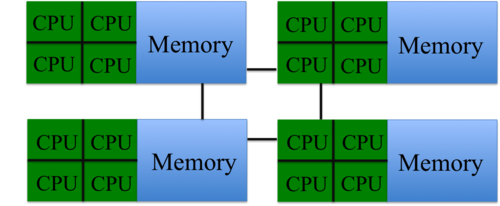

Non-Uniform Memory Access (NUMA):

- Often made by physically linking two or more SMPs

- One SMP can directly access memory of another SMP

- Not all processors have equal access time to all memories

- Memory access across link is slower

- If cache coherency is maintained, then may also be called CC-NUMA - Cache Coherent NUMA

Distributed Memory

Distributed memory, in the hardware sense, refers to the case where a processor can access another processor's memory only through a network. In the software sense, each processor sees only its local machine's memory directly and must communicate over the network to access the memory of other processors.
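The contrast with shared memory can be sketched as follows. Each worker keeps its data private and the only way to learn anything about it is an explicit message. Here a `queue.Queue` stands in for the network and threads stand in for separate machines; both are assumptions made to keep the example self-contained, and `distributed_sum` is a hypothetical name.

```python
import queue
import threading


def distributed_sum(chunks):
    """Each worker owns one private chunk and reports its sum by message."""
    inbox = queue.Queue()  # stand-in for the network link to the collector

    def worker(rank, private_chunk):
        # Only this worker touches private_chunk; nothing else reads it directly.
        inbox.put((rank, sum(private_chunk)))  # explicit send

    threads = [
        threading.Thread(target=worker, args=(rank, chunk))
        for rank, chunk in enumerate(chunks)
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    # Explicit receive: one message per worker, keyed by the sender's rank.
    return dict(inbox.get() for _ in chunks)


if __name__ == "__main__":
    print(distributed_sum([[1, 2], [3, 4], [5]]))
```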

Hybrid

A combination of the two kinds of memory is what today's fast supercomputers usually use. A hybrid memory system is essentially a network of shared-memory units. Within each shared-memory component, the memory is accessible to all the CPUs; in addition, the CPUs can access tasks and information stored on other units through the network.

Communication in parallel computers

Communication in parallel computing uses one of the following interfaces:

- OpenMP

- MPI (MPI, OpenMPI)

- Hybrid

OpenMP is used for communication between tasks running concurrently on the same node with access to shared memory. Assume a machine in which each node contains 16 cores with shared access to 32 GB of memory. If your parallelized application can use up to 16 cores, the tasks running on a single node communicate using OpenMP.

Assume the same machine. If you want to run the same application on 16 nodes using only one core on each node, communication between nodes is necessary because memory is not shared between nodes. MPI (Message Passing Interface) provides this communication: it is used between tasks that use distributed memory.

Again, assume the same machine. What if you want to use two nodes (8 cores on each)? The tasks running on the same node communicate using OpenMP, while tasks running on different nodes communicate using MPI. This style is called hybrid programming because it takes advantage of hybrid memory.
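The three layouts above (1 node with 16 cores, 16 nodes with 1 core each, 2 nodes with 8 cores each) can be compared with a small sketch. It assumes tasks are numbered from 0 and placed on nodes in contiguous blocks; that placement rule, and the helper names `node_of` and `channel`, are assumptions for illustration only.

```python
def node_of(task_id, tasks_per_node):
    """Node index of a task, assuming contiguous block placement."""
    return task_id // tasks_per_node


def channel(task_a, task_b, tasks_per_node):
    """Which mechanism two tasks would use to communicate."""
    same_node = node_of(task_a, tasks_per_node) == node_of(task_b, tasks_per_node)
    return "shared memory (OpenMP)" if same_node else "network (MPI)"


if __name__ == "__main__":
    # 16 tasks in each case; only the number of tasks per node changes.
    for tasks_per_node in (16, 1, 8):
        print(tasks_per_node, channel(0, 7, tasks_per_node), channel(7, 8, tasks_per_node))
```

With 8 tasks per node, tasks 0 and 7 share a node while tasks 7 and 8 do not, which is the hybrid case: both mechanisms are in use within the same job.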

It is a common mistake to assume that OpenMP and OpenMPI are the same. Open MPI (often written OpenMPI) is an open-source implementation of the MPI standard and should not be confused with OpenMP.

Run OpenMP Applications

| Compiler | Compiler option | Default number of threads (if not set) |
|---|---|---|
| GNU (gcc, g++, gfortran) | -fopenmp | as many threads as available cores |
| Intel (icc, ifort) | -openmp (-qopenmp in newer releases) | as many threads as available cores |
| Portland Group (pgcc, pgCC, pgf77, pgf90) | -mp | one thread |
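The effect of the last column can be mimicked with a short sketch: an explicit `OMP_NUM_THREADS` setting wins, and otherwise the compiler's default applies. This is only a model of the behavior summarized in the table, not how any OpenMP runtime is implemented; the function `resolve_num_threads` and its `default_all_cores` parameter are hypothetical.

```python
import os


def resolve_num_threads(env=None, default_all_cores=True):
    """Model the thread count an OpenMP program would start with."""
    env = os.environ if env is None else env
    value = env.get("OMP_NUM_THREADS", "").strip()
    if value:
        # The variable may hold a comma-separated list for nested
        # parallelism; the first entry applies to the outermost level.
        return int(value.split(",")[0])
    # Not set: fall back to the compiler's default from the table.
    return (os.cpu_count() or 1) if default_all_cores else 1


if __name__ == "__main__":
    print(resolve_num_threads({"OMP_NUM_THREADS": "8"}))
    print(resolve_num_threads({}, default_all_cores=False))
```

Setting the variable explicitly in the job script removes the dependence on which compiler built the executable.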

Sample job script:

#!/bin/bash

#

#PBS -N <job_name>

#PBS -M <your_email_address>

# Mail is sent when the job aborts (a), begins (b), or ends (e).

#PBS -m abe

#PBS -o <output file name>

#PBS -e <error file name>

#PBS -j oe

#PBS -l nodes=1:ppn=<number of processors>

#PBS -l walltime=<hours:minutes:seconds>

#PBS -l pmem=<memory gb>

module load <compiler>

module load <other needed modules>

cd $PBS_O_WORKDIR

export OMP_NUM_THREADS=<number of threads>

./<executable-path> < <input-file-name> > <output-file-name>

Run MPI/Hybrid Applications

Sample job script:

#!/bin/bash

#

#PBS -N <job_name>

#PBS -M <your_email_address>

# Mail is sent when the job aborts (a), begins (b), or ends (e).

#PBS -m abe

#PBS -o <output file name>

#PBS -e <error file name>

#PBS -j oe

#PBS -l nodes=<number of nodes>:ppn=<number of processors>:infiniband

#PBS -l walltime=<hours:minutes:seconds>

#PBS -l pmem=<memory gb>

module load <compiler>

module load <other needed modules>

cd $PBS_O_WORKDIR

mpiexec <executable-path> < <input-file-name> > <output-file-name>