Account and QOS limits under SLURM

Every group on HiPerGator (HPG) must have an investment with a corresponding hardware allocation to be able to do any work on HPG. Each allocation is associated with a scheduler account. Each account has two quality of service (QOS) levels: a high-priority investment QOS and a low-priority burst QOS. The latter allows short-term borrowing of unused resources from other groups' accounts. In turn, each user in a group has a scheduler account association, and it is this association that determines which QOSes are available to a particular user. Users with secondary Linux group membership also have associations with the QOSes of their secondary groups.

In summary, each HPG user has scheduler associations with group-account-based QOSes that determine what resources are available to the user's jobs. A QOS can be thought of as a pool of CPU cores and memory (RAM) with a maximum run time (time limit) and an associated starting priority level. Jobs draw on these pools to run applications, subject to the QOS limits reviewed below.

Account and QOS

Using the resources from a secondary group

By default, when you submit a job on HiPerGator, it will use the resources from your primary group. You can easily see your primary and secondary groups with the id command:

[agator@login4 ~]$ id

uid=12345(agator) gid=12345(gator-group) groups=12345(gator-group),12346(orange-group),12347(blue-group)

[agator@login4 ~]$

As shown above, our fictional user agator's primary group is gator-group and they also have secondary groups of orange-group and blue-group.
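When only the group names are needed, for example to fill in --account in a script, the standard id options -gn and -Gn print them without the numeric IDs. The following is a minimal sketch; the helper names primary_group and secondary_groups are hypothetical and chosen only for illustration.

```shell
#!/bin/bash
# Hypothetical helpers; only the standard `id` options -gn and -Gn are used.

# Print the name of the current user's primary group.
primary_group() {
    id -gn
}

# Print every group of the current user except the primary one, one per line.
secondary_groups() {
    local primary
    primary=$(id -gn)
    id -Gn | tr ' ' '\n' | grep -vxF -- "$primary"
}

echo "Primary group: $(primary_group)"
```

For the fictional user agator, primary_group would print gator-group and secondary_groups would list orange-group and blue-group.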

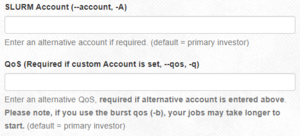

To use the resources of one of their secondary groups rather than their primary group, agator can use the --account and --qos flags in the submit script, on the sbatch command line, or in the boxes of the Open OnDemand interface.

For example, to use the orange-group resources, they could:

- In a submit script, add these lines:

#SBATCH --account=orange-group

#SBATCH --qos=orange-group

- In the sbatch command:

sbatch --account=orange-group --qos=orange-group my_script.sh

- Using Open OnDemand: enter the account and QOS in the corresponding boxes of the interface.

- Note: JupyterHub can only use your primary group's resources and cannot be used for accessing secondary group resources.

See your associations

On the command line, you can view your SLURM associations with the showAssoc command:

$ showAssoc <username>

Example: $ showAssoc magitz

Output:

User               Account    Def Acct   Def QOS   QOS

------------------ ---------- ---------- --------- ----------------------------------------

magitz             zoo6927    ufhpc      ufhpc     zoo6927,zoo6927-b

magitz             ufhpc      ufhpc      ufhpc     ufhpc,ufhpc-b

magitz             soltis     ufhpc      soltis    soltis,soltis-b

magitz             borum      ufhpc      borum     borum,borum-b

The output shows that the user magitz has four account associations and eight different QOSes. By convention, a user's default account is always the account of their primary group. Additionally, their default QOS is the investment (high-priority) QOS. If a user does not explicitly request a specific account and QOS, the user's default account and QOS will be assigned to the job.

If the user magitz wanted to use the borum group's account, to which he has access by virtue of the borum account association, he would specify the account and the chosen QOS in his batch script as follows:

#SBATCH --account=borum

#SBATCH --qos=borum

Or, for the burst QOS:

#SBATCH --account=borum

#SBATCH --qos=borum-b

Note that both --account and --qos must be specified. Otherwise the scheduler will assume that the default ufhpc account is intended, and neither the borum nor the borum-b QOS will be available to the job. Consequently, the scheduler would deny the submission. In addition, you cannot mix and match resources from different allocations.

These sbatch directives can also be given as command line arguments to srun. For example:

$ srun --account=borum --qos=borum-b <example_command>
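Because the account and the QOS always have to be given together, the pair of options can be generated from the group name. The sketch below assumes that the burst QOS is named after the account with a -b suffix, as in the showAssoc output shown earlier; qos_flags is a hypothetical helper, not a HiPerGator tool.

```shell
#!/bin/bash
# qos_flags GROUP [investment|burst]
# Hypothetical helper: prints matching --account/--qos options for a group.
# Assumes the burst QOS is named "<group>-b", as in the showAssoc output.
qos_flags() {
    local group=$1 kind=${2:-investment}
    case "$kind" in
        investment) printf -- '--account=%s --qos=%s\n' "$group" "$group" ;;
        burst)      printf -- '--account=%s --qos=%s-b\n' "$group" "$group" ;;
        *)          echo "unknown QOS kind: $kind" >&2; return 1 ;;
    esac
}

qos_flags borum burst   # prints: --account=borum --qos=borum-b
```

The result can be passed straight to the scheduler, for instance as sbatch $(qos_flags borum burst) my_script.sh, where the command substitution is left unquoted on purpose so that it expands to two separate options.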

QOS Resource Limits

CPU cores, Memory (RAM), GPU accelerators, software licenses, etc. are referred to as Trackable Resources (TRES) by the scheduler. The TRES available in a given QOS are determined by the group's investments and the QOS configuration.

View the trackable resource limits for a QOS:

$ showQos <specified_qos>

Example: $ showQos borum

Output:

Name                 Descr                          GrpTRES                                       GrpCPUs

-------------------- ------------------------------ --------------------------------------------- --------

borum                borum qos                      cpu=9,gres/gpu=0,mem=32400M                   9

We can see that the borum investment QOS has a pool of 9 CPU cores, 32GB of RAM, and no GPUs. This pool of resources is shared among all members of the borum group.

Similarly, the borum-b burst QOS resource limits shown by $ showQos borum-b are:

Name                 Descr                          GrpTRES                                       GrpCPUs

-------------------- ------------------------------ --------------------------------------------- --------

borum-b              borum burst qos                cpu=81,gres/gpu=0,mem=291600M                 81

There are additional base priority and run time limits associated with QOSes. To display them, run:

$ sacctmgr show qos format="name%-20,Description%-30,priority,maxwall" <specified_qos>

Example: $ sacctmgr show qos format="name%-20,Description%-30,priority,maxwall" borum borum-b

Output:

Name                 Descr                          Priority   MaxWall

-------------------- ------------------------------ ---------- -----------

borum                borum qos                      36000      31-00:00:00

borum-b              borum burst qos                900        4-00:00:00

The investment and burst QOS jobs are limited to 31-day and 4-day run times, respectively. Additionally, the base priority of a burst QOS job is 1/40th that of an investment QOS job. It is important to remember that the base priority is only one component of the job's overall priority and that the priority will change over time as the job waits in the queue.

The burst QOS CPU and memory limits are nine times (9x) those of the investment QOS, up to a certain limit. They are intended to allow groups to take advantage of unused resources for short periods of time by borrowing resources from other groups.
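As a quick check of the numbers above, the burst limits can be derived from the investment GrpTRES string by multiplying the CPU and memory entries by nine. The sketch below does that with awk. burst_tres is a hypothetical helper, and it ignores the upper cap on burst limits, so the authoritative values remain those printed by showQos for the burst QOS.

```shell
#!/bin/bash
# burst_tres "cpu=9,gres/gpu=0,mem=32400M"
# Hypothetical helper: scales the cpu= and mem= entries of a GrpTRES string
# by 9 and leaves every other entry untouched. Assumes mem is given in M.
burst_tres() {
    echo "$1" | awk -F, -v OFS=, '{
        for (i = 1; i <= NF; i++) {
            split($i, kv, "=")
            if (kv[1] == "cpu") $i = "cpu=" (kv[2] * 9)
            if (kv[1] == "mem") $i = "mem=" ((kv[2] + 0) * 9) "M"
        }
        print
    }'
}

burst_tres "cpu=9,gres/gpu=0,mem=32400M"   # prints: cpu=81,gres/gpu=0,mem=291600M
```

The result matches the borum-b limits reported by showQos above.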

QOS Time Limits

- Jobs with longer time limits are more difficult to schedule.

- Long time limits make system maintenance harder. We have to perform maintenance on the systems (OS updates, security patches, etc.). If the allowable time limits were longer, it could make important maintenance tasks virtually impossible. Of particular importance is the ability to install security updates on the systems quickly and efficiently. If we cannot install them because user jobs are running for months at a time, we have to choose to either kill the user jobs or risk security issues on the system, which could affect all users.

- The longer a job runs, the more likely it is to end prematurely due to random hardware failure.

Thus, if the application supports saving and resuming the analysis, we recommend checkpointing your jobs and running several shorter jobs that restart from the saved state instead of one extremely long job.
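The save-and-resume pattern can be sketched in plain bash. The function below is only an illustration under stated assumptions: run_steps, the checkpoint file, and the notion of a fixed number of equal steps are hypothetical stand-ins for whatever checkpoint mechanism the application itself provides.

```shell
#!/bin/bash
# run_steps CHECKPOINT_FILE TOTAL_STEPS STEPS_PER_JOB
# Hypothetical sketch: resumes from the step recorded in CHECKPOINT_FILE,
# performs at most STEPS_PER_JOB steps, and records progress after each one.
# Prints the last completed step.
run_steps() {
    local ckpt=$1 total=$2 budget=$3
    local step=0 done_now=0

    # Resume from the previous job's progress, if any was saved.
    if [ -s "$ckpt" ]; then
        step=$(cat "$ckpt")
    fi

    while [ "$step" -lt "$total" ] && [ "$done_now" -lt "$budget" ]; do
        # One unit of real work (e.g. one iteration of the analysis) goes here.
        step=$((step + 1))
        done_now=$((done_now + 1))
        echo "$step" > "$ckpt"    # save progress so a later job can continue
    done

    echo "$step"
}
```

Each short job would call run_steps with the same checkpoint file. A job that stops before the total is reached can be followed by another submission of the same script, which picks up where the previous one left off.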

The 31 day investment QOS time limit on HiPerGator is generous compared to other major institutions. Here are examples we were able to find.

| Institution | Maximum Runtime |
|---|---|
| New York University | 4 days |
| University of Southern California | 2 weeks for 1 node, otherwise 1 day |
| Penn State | 2 weeks for up to 32 cores (contributors), 4 days for up to 256 cores otherwise |
| UMBC | 5 days |
| TACC: Stampede | 2 days |
| TACC: Lonestar | 1 day |
| Princeton: Della | 6 days |
| Princeton: Hecate | 15 days |
| University of Maryland | 14 days |

CPU cores and Memory (RAM) Resource Use

CPU cores and RAM are allocated to jobs independently, as requested in job scripts. When deciding how many CPU cores and how much memory to request for a job, take into account the QOS limits based on the group investment, the limitations of the hardware (compute nodes), and the desire to be a good neighbor on a shared resource like HiPerGator. The goal is to ensure that system resources are allocated efficiently and used fairly, and that everyone has a chance to get their work done without negatively affecting work performed by other researchers.

HiPerGator consists of many interconnected servers (compute nodes). The hardware resources of each compute node, including CPU cores, memory, memory bandwidth, network bandwidth, and local storage, are limited. If any single one of these resources is fully consumed, the remaining unused resources can become effectively wasted, which makes it progressively harder or even impossible to achieve the shared goals of Research Computing and the UF Researcher Community stated above. See the Available Node Features for details on the hardware on compute nodes. Nodes with similar hardware are generally separated into partitions. If the job requires larger nodes or particular hardware, make sure to explicitly specify a partition. Example:

--partition=bigmem

When a job is submitted, if no resource request is provided, the default limits of 1 CPU core, 600MB of memory, and a 10 minute time limit will be set on the job by the scheduler. Check the resource request if it's not clear why the job ended before the analysis was done. Premature exit can be due to the job exceeding the time limit or the application using more memory than the request.

Run test jobs to find out what resources a particular analysis needs. To make sure that the analysis completes successfully without wasting valuable resources, you must specify both the number of CPU cores and the amount of memory needed for the analysis in the job script. See Sample SLURM Scripts for examples of specifying CPU core requests depending on the nature of the application running in a job. Use the --mem (total job memory on a node) or --mem-per-cpu (per-core memory) option to request memory. Use --time to set the time limit to an appropriate value within the QOS limit.
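A job script that sets CPU cores, memory, and time limit explicitly might look like the sketch below. The job name, the resource amounts, and the final command are placeholders chosen for illustration, not recommendations. The options themselves (--job-name, --ntasks, --cpus-per-task, --mem, --time) are standard sbatch options.

```shell
#!/bin/bash
#SBATCH --job-name=example_job     # placeholder name
#SBATCH --ntasks=1                 # a single task ...
#SBATCH --cpus-per-task=4          # ... using 4 CPU cores
#SBATCH --mem=8gb                  # total memory for the job on the node
#SBATCH --time=02:00:00            # 2 hour limit, within the QOS limit

# Placeholder for the real analysis command.
echo "Running on ${SLURM_CPUS_PER_TASK:-1} core(s)"
```

After a test run, the requested values can be adjusted toward what the analysis actually used.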

As jobs are submitted and the resources under a particular account are consumed, the group may reach either the CPU or the memory group limit. The group has consumed all CPU cores in a QOS if the scheduler shows QOSGrpCpuLimit, or all memory if it shows QOSGrpMemLimit, as the reason a job is pending (the 'NODELIST(REASON)' column of the squeue command output).

Example:

JOBID PARTITION NAME USER ST TIME NODES NODELIST(REASON)

123456 bigmem test_job jdoe PD 0:00 1 (QOSGrpMemLimit)

Reaching a resource limit of a QOS does not interfere with job submission. However, the jobs with this reason will not run and will remain in the pending state until the QOS use falls below the limit.
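To spot such jobs quickly, the pending reason can be pulled out of the squeue output. The sketch below assumes the two-column format produced by squeue -h -t PD -o "%i %r" (job ID and reason, no header); limit_blocked_jobs is a hypothetical helper name.

```shell
#!/bin/bash
# limit_blocked_jobs: reads "JOBID REASON" lines on stdin and prints the
# jobs held back by a group CPU or memory limit of the QOS.
# Hypothetical helper; the expected input is the output of
#   squeue -h -u "$USER" -t PD -o "%i %r"
limit_blocked_jobs() {
    awk '$2 == "QOSGrpCpuLimit" || $2 == "QOSGrpMemLimit" { print $1, $2 }'
}

# Example with canned input instead of a live squeue call:
printf '%s\n' "123456 QOSGrpMemLimit" "123457 Priority" | limit_blocked_jobs
# prints: 123456 QOSGrpMemLimit
```

On a login node the helper would be fed from squeue directly, with the squeue command from the comment piped into limit_blocked_jobs.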

If the resource request for a submitted job is impossible to satisfy within either the QOS limits or the HiPerGator compute node hardware for a particular partition, the scheduler will refuse the job submission altogether and return the following error message:

sbatch: error: Batch job submission failed: Job violates accounting/QOS policy

(job submit limit, user's size and/or time limits)

GPU Resource Limits

As per the Scheduler/Job Policy, there is no burst QOS for GPU jobs.

Choosing QOS for a Job

When choosing between the high-priority investment QOS and the 9x larger low-priority burst QOS, you should start by considering the overall resource requirements for the job. For smaller allocations the investment QOS may not be large enough for some jobs, whereas for other smaller jobs the wait time in the burst QOS could be too long. In addition, consider the current state of the account you are planning to use for your job.

To show the status of any SLURM account as well as the overall usage of HiPerGator resources, use the following command from the ufrc environment module:

$ slurmInfo

for the primary account or

$ slurmInfo <account>

for another account

Example: $ slurmInfo ufgi:

----------------------------------------------------------------------

Allocation summary: Time Limit Hardware Resources

Investment QOS Hours CPU MEM(GB) GPU

----------------------------------------------------------------------

ufgi 744 150 527 0

----------------------------------------------------------------------

CPU/MEM Usage: Running Pending Total

CPU MEM(GB) CPU MEM(GB) CPU MEM(GB)

----------------------------------------------------------------------

Investment (ufgi): 100 280 0 0 100 280

----------------------------------------------------------------------

HiPerGator Utilization

CPU: Used (%) / Total MEM(GB): Used (%) / Total

----------------------------------------------------------------------

Total : 43643 (92%) / 47300 113295500 (57%) / 196328830

----------------------------------------------------------------------

* Burst QOS uses idle cores at low priority with a 4-day time limit

Run 'slurmInfo -h' to see all available options

The output shows that the investment QOS for the ufgi account is actively used. Since 100 of the 150 available CPU cores are in use, only 50 cores remain available. Likewise, since 280GB of the 527GB in the investment QOS are in use, 247GB are still available. The ufgi-b burst QOS is unused. The total HiPerGator use is 92% of all CPU cores and 57% of all memory on compute nodes, which means that there is little available capacity from which burst resources can be drawn. In this case, a job submitted to the ufgi-b QOS would likely take a long time to start. If the overall utilization were below 80%, it would be easier to start a burst job within a reasonable amount of time. When the HiPerGator load is high, or if the burst QOS is actively used, the investment QOS is more appropriate for a smaller job.
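This reasoning can be written down as a rough rule of thumb. The sketch below is illustrative only: suggest_qos is a hypothetical helper, its inputs are read by hand from the slurmInfo output, and the 80% threshold is simply the figure mentioned in the text, not a scheduler setting.

```shell
#!/bin/bash
# suggest_qos NEEDED_CPUS INVEST_TOTAL INVEST_USED CLUSTER_UTIL_PCT
# Hypothetical rule of thumb:
#   - job fits into the free investment cores   -> investment
#   - otherwise, cluster utilization below 80%  -> burst
#   - otherwise                                 -> burst-long-wait
suggest_qos() {
    local needed=$1 total=$2 used=$3 util=$4
    local free=$((total - used))
    if [ "$needed" -le "$free" ]; then
        echo "investment"
    elif [ "$util" -lt 80 ]; then
        echo "burst"
    else
        echo "burst-long-wait"
    fi
}

suggest_qos 32 150 100 92    # ufgi example: 50 cores free, prints: investment
```

The helper only looks at CPU cores. A fuller check would apply the same comparison to memory and would also consider how busy the burst QOS already is.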

Examples

A hypothetical group ($GROUP in the examples below) has an investment of 42 CPU cores and 148GB of memory. That is the group's so-called soft limit for HiPerGator jobs in the investment QOS, which runs at high priority with a time limit of up to 744 hours. The hard limit, accessible through the so-called burst QOS, is 9 times that: 378 CPU cores and 1332GB of memory for up to 96 hours at low base priority. Together the two QOSes potentially give the group 10x the invested resources, i.e. 420 CPU cores and 1480GB of memory in total.

Let's test:

[marvin@gator ~]$ srun --mem=126gb --pty bash -i

srun: job 123456 queued and waiting for resources

#Looks good, let's terminate the request with Ctrl+C

^C

srun: Job allocation 123456 has been revoked

srun: Force Terminated job 123456

On the other hand, going even 1gb over that limit results in the job limit error already encountered above:

[marvin@gator ~]$ srun --mem=127gb --pty bash -i

srun: error: Unable to allocate resources: Job violates accounting/QOS policy (job submit limit, user's size and/or time limits)

At this point the group can try using the 'burst' QOS with

#SBATCH --qos=$GROUP-b

Let's test:

[marvin@gator3 ~]$ srun -p bigmem --mem=400gb --time=96:00:00 --qos=$GROUP-b --pty bash -i

srun: job 123457 queued and waiting for resources

#Looks good, let's terminate with Ctrl+C

^C

srun: Job allocation 123457 has been revoked

srun: Force Terminated job 123457

However, now there's the burst qos time limit to consider.

[marvin@gator ~]$ srun --mem=400gb --time=300:00:00 --pty bash -i

srun: error: Unable to allocate resources: Job violates accounting/QOS policy (job submit limit, user's size and/or time limits)

Let's reduce the time limit to what burst qos supports and try again.

[marvin@gator ~]$ srun --mem=400gb --time=96:00:00 --pty bash -i

srun: job 123458 queued and waiting for resources

#Looks good, let's terminate with Ctrl+C

^C

srun: Job allocation 123458 has been revoked

srun: Force Terminated job

Pending Job Reasons

To reiterate, the following Reasons can be seen in the NODELIST(REASON) column of the squeue command when the group reaches the resource limit for a QOS:

- QOSGrpCpuLimit

All CPU cores available for the listed account within the respective QOS are in use.

- QOSGrpMemLimit

All memory available for the listed account within the respective QOS as described in the previous section is in use.

- Note

Once it has marked any jobs in the group's list of pending jobs with a reason of QOSGrpCpuLimit or QOSGrpMemLimit, SLURM may not evaluate other jobs and they may simply be listed with the Priority reason code.